The EU plans to let startups train AI models on its HPC supercomputers. Startups seeking access to the EU’s high-power compute resource, which includes pre-exascale and petascale supercomputers, must join its AI governance program.

In May, the EU announced a plan for a stop-gap set of voluntary rules or standards for industry developing and applying AI while formal regulations were developed. The initiative would prepare firms for formal AI rules in a few years.

The AI Act, a risk-based framework for regulating AI applications, is being negotiated by EU co-legislators and is expected to be adopted soon. The EU has also initiated work with the US and other international partners on an AI Code of Conduct to help bridge international legislative gaps as countries develop AI governance regimes.

But the EU AI governance strategy includes carrots: high-performance compute for “responsible” AI startups.

A Commission spokesman said the startup-focused plan builds on the EuroHPC Access Calls for proposals process that already allows industry to access supercomputers with “a new initiative to facilitate and support access to European supercomputer capacity for ethical and responsible AI start-ups”.

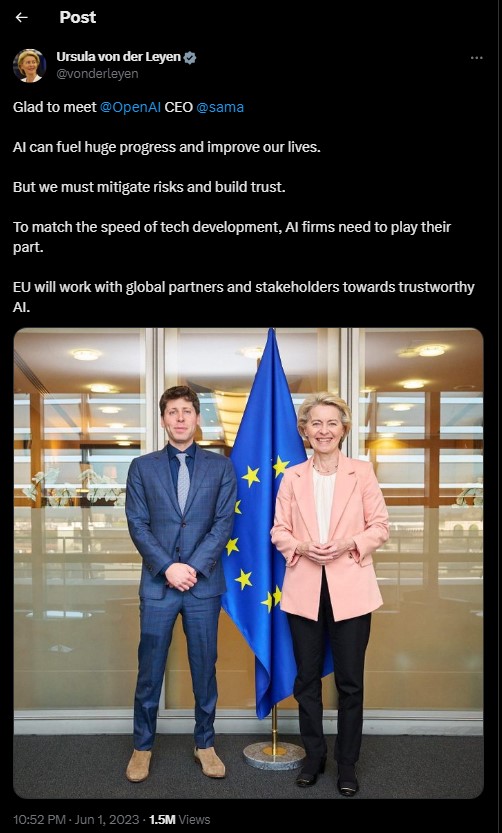

EU president Ursula von der Leyen announced HPC access for AI startups during the annual ‘State of the Union’ address today.

Warning of extinction

The EU president also warned that AI is “moving faster than even its developers anticipated” and that “We have a narrowing window of opportunity to guide this technology responsibly.”

AI will improve healthcare, boost productivity, and help address climate change, but we shouldn’t underestimate the real threats, she said, noting that hundreds of leading AI developers, academics, and experts recently warned that “Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war”.

She promoted the EU’s efforts to pass comprehensive AI governance legislation and suggested establishing a body similar to the IPCC to support policymakers globally with research and briefings on the latest science on AI risks, presumably including existential concerns.

She said, “I believe Europe, together with partners, should lead the way on a new global framework for AI, built on three pillars: guardrails, governance and guiding innovation.” She described the EU’s AI Act as already a blueprint for the world and said the rules must be adopted quickly and implemented.

She added: “[W]e should also join forces with our partners to ensure a global approach to understanding the impact of AI in our societies,” expanding on the EU’s AI governance strategy. She pointed to the IPCC’s vital role in providing policymakers with the latest climate science.

“I think AI needs a similar body to assess its risks and benefits for humanity. Scientists, tech companies, and independent experts at the table. This will enable a fast, globally coordinated response, building on the [G7] Hiroshima Process and others.”

Von der Leyen’s mention of existential AI risks is notable, as the EU’s focus on AI safety has so far been on reducing less theoretical risks from automation, such as threats to physical safety, bias, discrimination, disinformation, and liability issues.

The high-level intervention on existential AI risk was welcomed by London-based AI safety startup Conjecture.

“Great to see Ursula von der Leyen, Commission president, acknowledged today that AI constitutes an extinction risk, as even the CEOs of the companies developing the largest AI models have admitted on the record,” said Andrea Miotti, the startup’s head of strategy and governance.

“With these stakes, the focus can’t be pitting geographies against each other for ‘competitiveness’; it’s stopping proliferation and flattening the capabilities curve.”

EU push for ‘responsible’ AI

On the third pillar, guiding innovation, von der Leyen’s address set out the plan to give AI startups access to the bloc’s HPC supercomputers for model training and promised further steering efforts.

The EU has eight supercomputers, often located in research institutions, including Lumi, a pre-exascale HPC supercomputer in Finland; MareNostrum 5, a pre-exascale supercomputer in Spain; and Leonardo, a third pre-exascale supercomputer in Italy. Two more powerful exascale supercomputers, Jupiter in Germany and Jules Verne in France, are scheduled to launch soon.

According to her, “Europe has now become a leader in supercomputing — with 3 of the 5 most powerful supercomputers in the world,” thanks to recent investments. “We must capitalize on this. This is why I can announce today a new initiative to train AI start-up models on our high-performance computers. But this will only be part of our innovation guidance. We need open communication with AI developers and deployers. Seven major US tech companies have agreed to voluntary safety, security, and trust rules.

“We will work with AI companies to voluntarily commit to the AI Act’s principles before it takes effect. Now we should combine this work to set minimum global AI safety and ethics standards.”

According to the Commission spokesman, scientific institutes, industry, and public administration can apply for and justify their need for “extremely large allocations in terms of compute time, data storage and support resources” to use EuroHPC supercomputers.

He said this EuroHPC JU access policy will be “fine-tuned with the aim to have a dedicated and swifter access track for SMEs and AI startups”.

“The Horizon [research] ethical criterion is already used to evaluate HPC supercomputer access. Similar criteria can be used to select HPC candidates for AI schemes,” the spokesman said.

In a LinkedIn blog post, EU internal market commissioner Thierry Breton wrote: “[W]e will launch the EU AI Start-Up Initiative, leveraging one of Europe’s biggest assets: Its public high-performance computing infrastructure. We will identify the most promising European AI start-ups and provide supercomputing capacity.”

“European supercomputing infrastructure will help start-ups train their latest AI models in days or weeks instead of months or years. And it will help them lead the development and scale-up of AI responsibly and in line with European values,” Breton said. The new initiative would build on the Commission’s January launch of Testing and Experimentation Facilities for AI and its focus on Digital Innovation Hubs. He also mentioned regulatory sandboxes under the upcoming AI Act, as well as the European Partnership on AI, Data, and Robotics and the Horizon Europe research programs, as ways to boost AI research.

The EU initiative to support select startups with HPC for AI model training may give them a competitive edge. The EU is clearly using in-demand resources to promote ‘the right kind of innovation’ (tech that aligns with European values).

AI governance discussion group

In another announcement, Breton’s blog post says the EU will power up an AI talking shop to promote inclusive governance.

“When developing AI governance, we must ensure the involvement of all – big tech, start-ups, businesses using AI across our industrial ecosystems, consumers, NGOs, academic experts and policy-makers,” he wrote. “This is why I will convene the European AI Alliance Assembly in November, bringing together all these stakeholders.”

In light of this announcement, the U.K. government’s effort to position itself as a global AI safety leader, with an AI Summit planned for this fall, may face regional competition.

The U.K. summit’s attendees are unknown, but ministers have raised concerns that the government is not consulting widely enough. AI giants Google DeepMind, OpenAI, and Anthropic pledged early or priority access to “frontier” models for U.K. AI safety research after a series of meetings between their CEOs and the U.K. prime minister.

Breton’s line about ensuring “the involvement of all” in AI governance—“not only big tech, but also start-ups, businesses using AI across our industrial ecosystems, consumers, NGOs, academic experts and policy-makers”—is a jab at the U.K.’s Big Tech-backed approach. (OpenAI CEO Sam Altman met with von der Leyen in June during his European tour, which may explain her sudden focus on “extinction level” AI risk.)

The Commission launched the European AI Alliance in 2018 as an online discussion forum, complemented by in-person meetings and workshops, that has brought together thousands of stakeholders to establish “an open policy dialogue on artificial intelligence”. This included leading the High-Level Expert Group on AI, which informed the Commission’s AI Act policymaking.

The AI Alliance has existed since 2019 but hasn’t met in two years, so commissioner Breton decided to convene it again, the Commission’s spokesman said. “The November Assembly will be crucial to AI Act adoption. The AI Act & AI Pact and our efforts to promote excellence and trust in AI will be prioritized.”

Tech Gadget Central Latest Tech News and Reviews
